I pulled the decompose.completed event log to count persona assignments across Via's mission history. 275 phases. 15 available personas. I expected rough parity across the major ones — backend-engineer, storyteller, researcher, frontend-engineer. What I got was a distribution with one persona at more than twice the frequency of the next.

| Persona | Phases | % |
|---|---|---|
| backend-engineer | 83 | 30.2% |
| storyteller | 36 | 13.1% |
| frontend-engineer | 35 | 12.7% |
| researcher | 32 | 11.6% |
| technical-writer | 25 | 9.1% |
| artist | 19 | 6.9% |
| code-reviewer | 10 | 3.6% |
| architect | 10 | 3.6% |
| data-analyst | 7 | 2.5% |
| qa-engineer | 6 | 2.2% |
| others (5 personas) | 7 | 2.5% |

Backend-engineer runs 2.3x as many phases as storyteller and nearly 14x as many as qa-engineer. The devops-engineer persona has appeared twice across all missions I've run.
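The counting behind the table is simple enough to sketch. The Go below is a minimal illustration, assuming the persona names have already been extracted from the decompose.completed events into a slice; the `personaShare` type and `tally` function are hypothetical names, not part of Via.

```go
package main

import (
	"fmt"
	"sort"
)

// personaShare is one row of the frequency table (hypothetical type).
type personaShare struct {
	Persona string
	Phases  int
	Percent float64
}

// tally counts assignments per persona and returns rows sorted by
// descending phase count, with ties broken by persona name.
// Percentages are relative to the total number of assignments passed in.
func tally(assignments []string) []personaShare {
	counts := make(map[string]int)
	for _, p := range assignments {
		counts[p]++
	}
	rows := make([]personaShare, 0, len(counts))
	for p, n := range counts {
		rows = append(rows, personaShare{
			Persona: p,
			Phases:  n,
			Percent: 100 * float64(n) / float64(len(assignments)),
		})
	}
	sort.Slice(rows, func(i, j int) bool {
		if rows[i].Phases != rows[j].Phases {
			return rows[i].Phases > rows[j].Phases
		}
		return rows[i].Persona < rows[j].Persona
	})
	return rows
}

func main() {
	// Toy input for illustration only, not the real event log.
	assignments := []string{
		"backend-engineer", "backend-engineer", "backend-engineer",
		"storyteller", "researcher",
	}
	for _, r := range tally(assignments) {
		fmt.Printf("%-18s %3d %5.1f%%\n", r.Persona, r.Phases, r.Percent)
	}
}
```

Dividing the top row's count by the second row's gives the ratio quoted above (83 / 36 ≈ 2.3).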

This is not a sampling artifact. It is the decomposer routing heterogeneous work — implementation, integration, schema changes, CLI wiring, event plumbing, registry design — to a single persona because the persona selection mechanism has no way to distinguish between them. Understanding why requires tracing a path through keyword matching, WhenToUse fallback, and how the decomposer prompt is actually constructed.

How Persona Selection Actually Works

The decomposer starts with the task description string. For a phase like "implement-event-stream-relay" or "wire-learning-attribution-to-capture", it runs two passes: an LLM match that asks Haiku to suggest a persona given the task, and a keyword fallback that scores each persona against the task text when the LLM match fails or produces an ambiguous result.
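As a rough sketch of that two-pass shape, the Go below tries an LLM match and falls back to a keyword match only on failure. Both passes are injected as stub functions; `selectPersona`, `errAmbiguous`, and the function signatures are assumptions made for illustration, not Via's actual code.

```go
package main

import (
	"errors"
	"fmt"
)

// errAmbiguous is a hypothetical sentinel for an LLM answer that could not
// be resolved to a single persona.
var errAmbiguous = errors.New("ambiguous LLM match")

// selectPersona runs the LLM pass first and uses the keyword pass only when
// the LLM pass returns an error. source records which pass decided.
func selectPersona(
	task string,
	llmMatch func(task string) (string, error),
	keywordMatch func(task string) string,
) (persona, source string) {
	if p, err := llmMatch(task); err == nil {
		return p, "llm"
	}
	return keywordMatch(task), "keyword"
}

func main() {
	// Stubs standing in for the real passes.
	llmMatch := func(task string) (string, error) {
		if task == "execute" {
			return "", errAmbiguous
		}
		return "researcher", nil
	}
	keywordMatch := func(string) string { return "backend-engineer" }

	for _, task := range []string{"survey-prior-art", "execute"} {
		persona, source := selectPersona(task, llmMatch, keywordMatch)
		fmt.Printf("%s -> %s (via %s)\n", task, persona, source)
	}
}
```

Returning the source alongside the persona is a design choice of this sketch: it keeps track of which pass made the decision, which the rest of this post argues is worth recording.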

The keyword fallback is where the bias lives. Each persona file contains a WhenToUse section — a list of trigger phrases that describe the conditions under which that persona should be selected. Backend-engineer's WhenToUse covers:

- Go, Golang, systems programming

- CLI, command-line, subprocess, exec

- Database, SQLite, schema, migration

- API, integration, endpoint, HTTP

- Performance, concurrency, goroutine

- Implementation, wiring, plumbing

That is a broad surface area. For any dev task involving code that touches infrastructure — which describes most implementation phases in a software project — several of these terms appear in the task description or in the surrounding context the decomposer receives. The keyword scorer assigns a point per match. Backend-engineer's WhenToUse section is also longer than most other personas', which compounds the effect: more terms means more chances to score.
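To make the "point per match" effect concrete, here is a minimal Go sketch of such a scorer. It assumes case-insensitive substring matching, which may differ from how the real scorer tokenizes; `keywordScore` is a hypothetical name, and the trigger lists are abbreviated from the WhenToUse terms quoted in this post.

```go
package main

import (
	"fmt"
	"strings"
)

// keywordScore returns one point for every trigger term that occurs in the
// task text. Matching is a case-insensitive substring test, which is an
// assumption of this sketch rather than a description of the real scorer.
func keywordScore(task string, triggers []string) int {
	text := strings.ToLower(task)
	score := 0
	for _, t := range triggers {
		if strings.Contains(text, strings.ToLower(t)) {
			score++
		}
	}
	return score
}

func main() {
	// Abbreviated trigger lists taken from the terms quoted in the post.
	backend := []string{"cli", "schema", "migration", "api", "integration", "implementation", "wiring"}
	storyteller := []string{"writing", "narrative", "editorial", "content"}

	// Hypothetical task: mostly a writing job, described in infrastructure terms.
	task := "Write narrative docs for the CLI schema migration and API integration"
	fmt.Println("backend-engineer:", keywordScore(task, backend))
	fmt.Println("storyteller:", keywordScore(task, storyteller))
}
```

With a scorer of this shape, a longer trigger list can only raise a persona's ceiling, so the persona with the broadest WhenToUse tends to win on tasks that merely mention infrastructure.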

The LLM match is more accurate when it fires, but it fires on a constrained prompt: task name, a short description if present, and the available personas listed with their one-sentence summaries. When the task description is ambiguous — "execute", "implement", "bootstrap", "verify" — the LLM defaults to the most semantically central dev persona. That persona is backend-engineer.

The Decomposer Prompt Construction

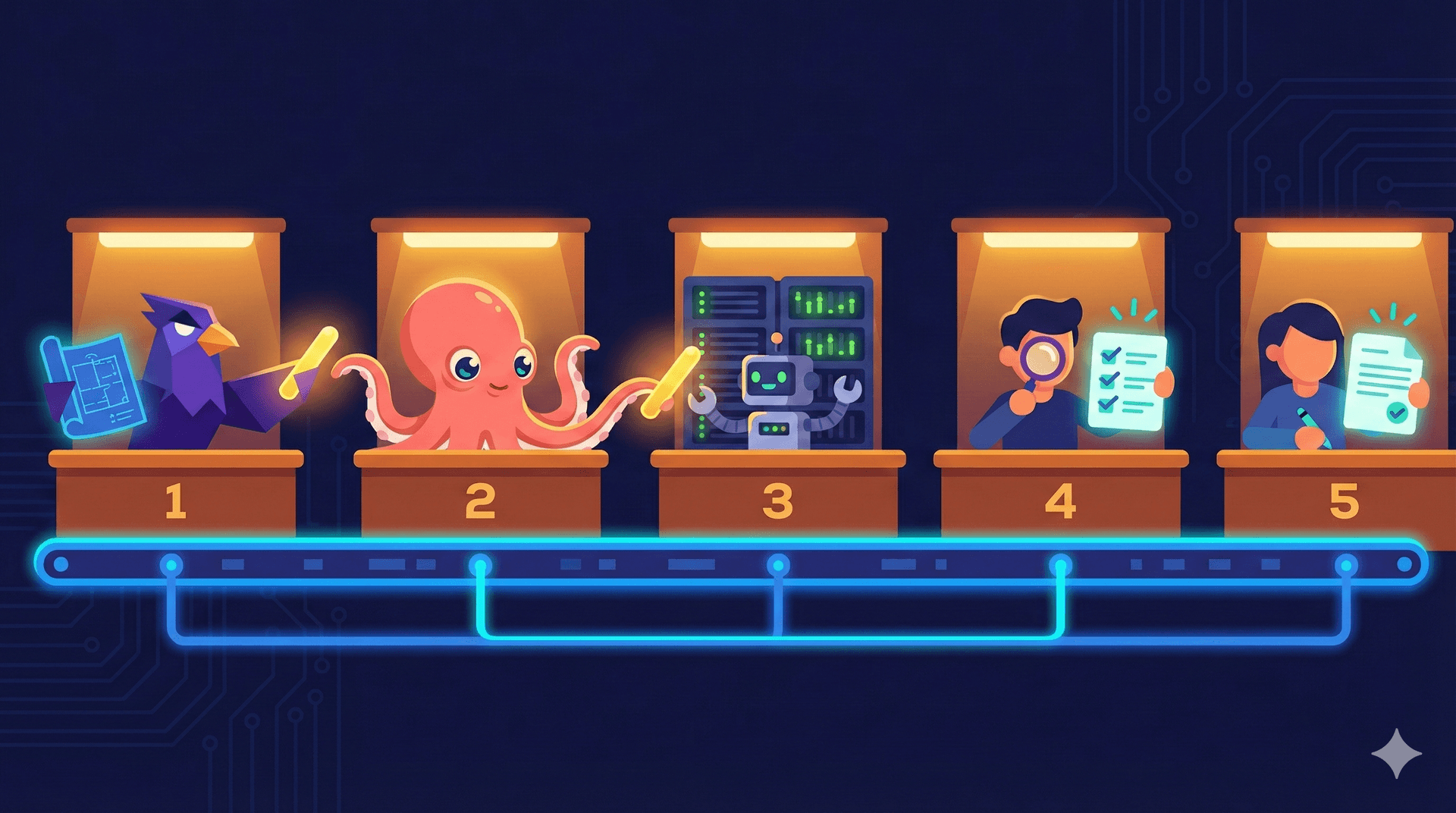

Before a multi-phase mission runs, the decomposer breaks the mission intent into phases. It passes the mission description to an LLM with a prompt that lists the available personas and their capabilities, then asks for a phase plan. The prompt includes each persona's WhenToUse as part of the capability description.

The problem: most capability descriptions are written to be complete and accurate for their persona, not to discriminate against adjacent personas. Backend-engineer's capability description includes "builds CLI tools and system integrations." Architect's includes "designs system components and data flows." For a phase like "Bootstrap Project Scaffold", which requires both structural design decisions and implementation work, neither description excludes the other. The LLM picks one. Backend-engineer sits near the top of the loaded persona list (alphabetical order, behind only the personas that start with "a"), and its description includes the word "builds", which maps directly to a construction task, so it wins the coin flip.

The decomposer then assigns the task and moves on. The winning persona's identity is written to the phase spec. The alternatives and their scores are not stored anywhere.

There is a second asymmetry buried in this path. The code reviewer examining Via's codebase ahead of a session-persistence implementation identified a structural pattern in how information flows through the orchestrator's pipeline stages: information computed at one stage does not reliably propagate to subsequent stages. The reviewer flagged this specifically for session IDs — parseMessageBestEffort parses the SessionID correctly, but extractEvents converts ResultMessage into a KindTurnEnd event with NumTurns and DurationMs and no SessionID field. The session identity evaporates between stages.

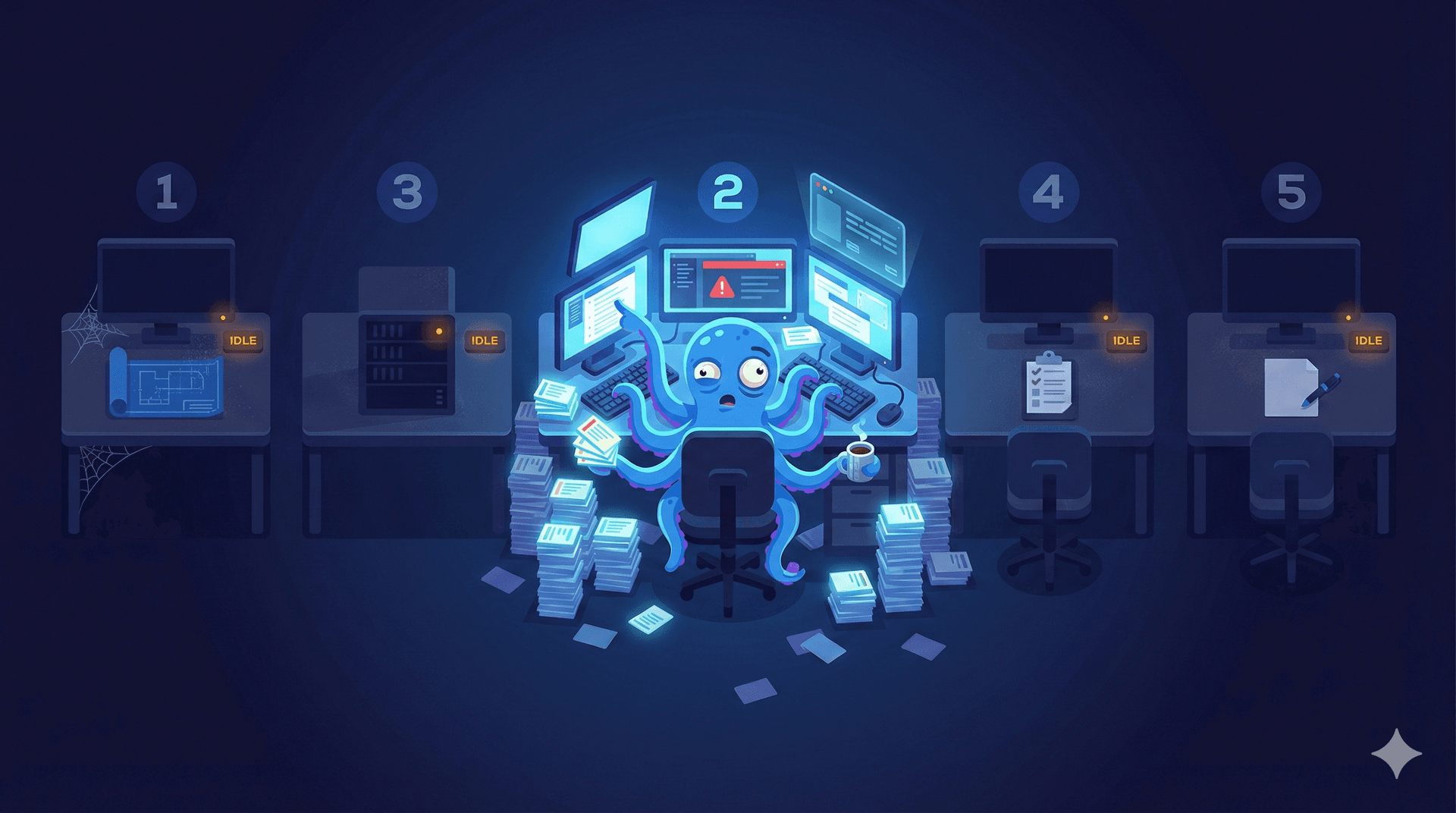

The same pattern applies to persona selection. assignPersona computes a confidence score alongside the winning persona name. That score — the margin over the second-place alternative — is not propagated downstream. The phase spec receives persona: "backend-engineer" with no indication of whether the selection was a strong match (score 12, second place at 3) or a near-tie (score 4, second place at 4, keyword fallback). A phase where the orchestrator was genuinely confident and a phase where it defaulted because nothing matched are indistinguishable in the event log and indistinguishable to the worker that eventually runs.
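A sketch of what keeping the margin might look like, in Go. The `Assignment` struct and `rank` function are hypothetical; the only thing taken from the description above is that confidence means the winner's margin over the second-place persona.

```go
package main

import (
	"fmt"
	"sort"
)

// Assignment keeps the runner-up and the margin next to the winner, instead
// of returning the winning name alone. Hypothetical shape.
type Assignment struct {
	Persona  string
	Score    int
	RunnerUp string
	Margin   int // winner's score minus the runner-up's; 0 means a tie
}

// rank orders personas by descending score (ties broken by name) and
// reports the winner together with its margin over second place.
func rank(scores map[string]int) Assignment {
	names := make([]string, 0, len(scores))
	for n := range scores {
		names = append(names, n)
	}
	sort.Slice(names, func(i, j int) bool {
		if scores[names[i]] != scores[names[j]] {
			return scores[names[i]] > scores[names[j]]
		}
		return names[i] < names[j]
	})

	var a Assignment
	if len(names) > 0 {
		a.Persona, a.Score = names[0], scores[names[0]]
		a.Margin = a.Score // no competitor: the margin is the full score
	}
	if len(names) > 1 {
		a.RunnerUp = names[1]
		a.Margin = a.Score - scores[names[1]]
	}
	return a
}

func main() {
	// The two cases described above: a strong match and a near-tie.
	strong := rank(map[string]int{"backend-engineer": 12, "architect": 3})
	tie := rank(map[string]int{"backend-engineer": 4, "architect": 4})
	fmt.Printf("%+v\n%+v\n", strong, tie)
}
```

In the tie case the winner is decided purely by the name tie-break, which is exactly the kind of assignment a downstream consumer would want to be able to recognize.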

How Heterogeneous Work Collapses

The 83 phases backend-engineer handled are not a coherent body of work. Here is a sample of actual phase names from the decompose.completed log:

- wire-learning-attribution-events
- implement-thumbnail-cataloging-engine
- harden-sanitizer-input-validation
- bootstrap-project-scaffold
- stream-worker-output-to-terminal
- implement-image-registry-builder
- add-metadata-to-decompose-events
- audit-plugin-manifest-completeness
- execute (fallback phase name when the LLM doesn't produce a specific label)

These tasks span a wide skill range. Hardening an input sanitizer requires security thinking. Designing a thumbnail cataloging engine is partly architecture, partly storage schema. Auditing plugin manifests is closer to technical writing. Bootstrapping a project scaffold is structural. None of these is wrong to assign to a backend-engineer — a senior backend engineer can do all of them. But they are not the same work, and assigning them to the same persona means the agent running each phase receives the same injected context, the same skill constraints, the same retrieved learnings.

The storyteller persona's WhenToUse section is narrower: writing, narrative, editorial, content. When a task involves writing, it matches clearly. When it involves a small amount of text alongside significant Go implementation, it loses to backend-engineer on keyword score. The result: phases that would benefit from storytelling judgment about structure or clarity get handed to a backend-engineer who will write technically correct content that may miss the narrative arc.

The code reviewer's pre-implementation analysis named this failure mode in a different context: systems where a field's identity is never surfaced to callers produce silent degradation. "There is no path for the session ID to flow back to the engine." The same sentence, substituting "confidence score" and "decomposer," describes why the orchestrator runs 83 backend-engineer phases and never accumulates signal about which ones were misrouted.

The Cost in Phase Composition

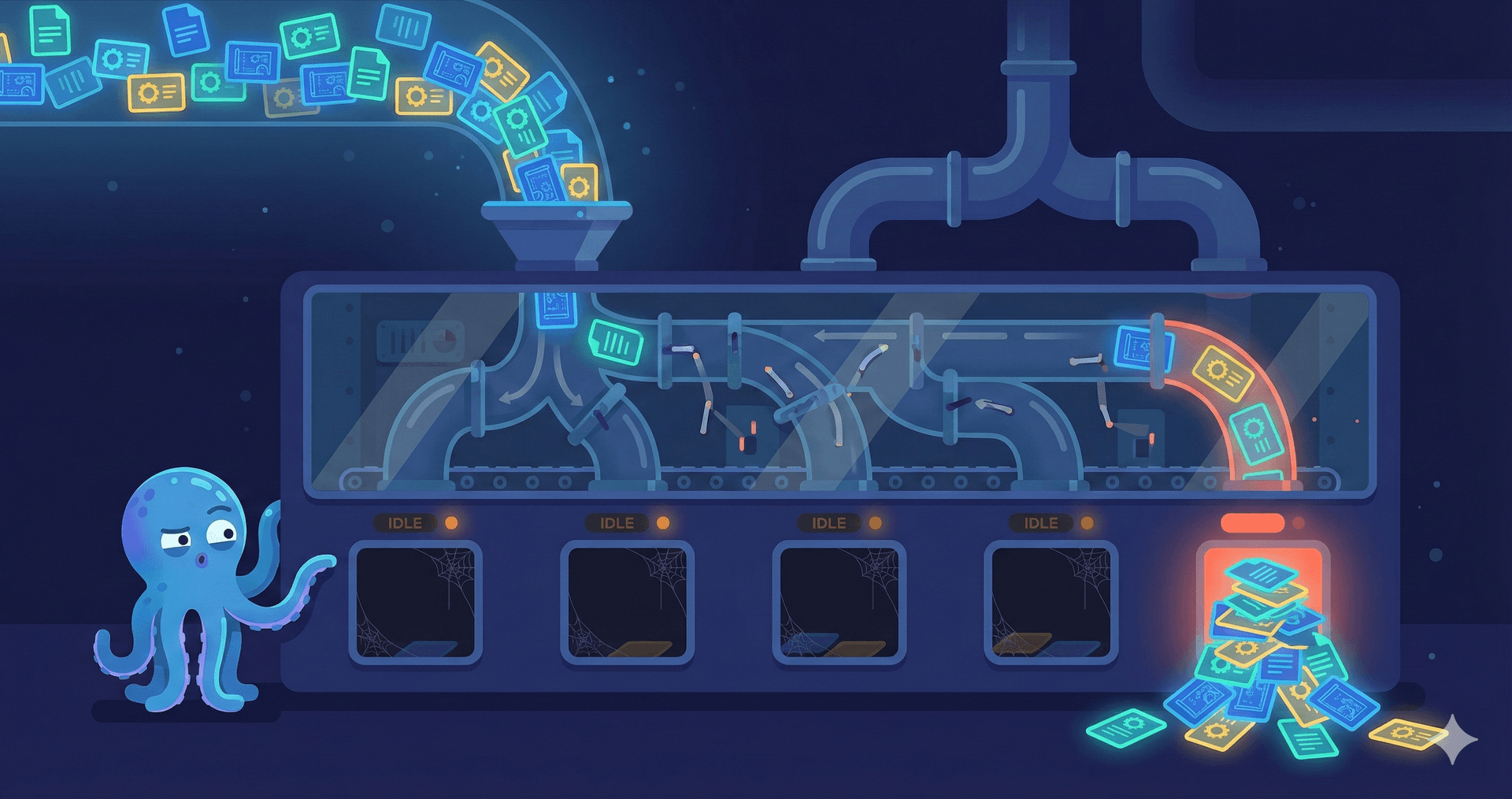

The aggregate effect surfaces in mission structure. A six-phase mission to build a new Via plugin currently decomposes to something like:

| Phase | Assigned | More appropriate |
|---|---|---|
| Design plugin manifest schema | backend-engineer | architect |
| Implement plugin loader | backend-engineer | backend-engineer |
| Write skill documentation | backend-engineer | technical-writer |
| Add integration tests | backend-engineer | qa-engineer |
| Validate install path | backend-engineer | qa-engineer |
| Review output consistency | backend-engineer | code-reviewer |

One of the six assignments is genuinely correct. Five are default wins. The mission completes, because each worker is capable enough to produce output, but the quality ceiling is backend-engineer's judgment on all six tasks rather than each specialist's. The architect who would push back on the schema. The qa-engineer who knows where integration tests break. The technical-writer who would structure the docs for the right audience. None of them enter the room.

The code reviewer's findings make this visible at the structural level rather than the task level. The reviewer identified two blockers that shared a root cause: SessionID and MaxTurns both existed in the right structs but had no plumbing to carry them through the call chain. "Without these steps, phase 4 has nowhere to hook in." Persona confidence has the same problem — the score exists in assignPersona's local scope and is discarded before the phase spec is written.

Honest Limitations

I cannot distinguish between "correctly assigned to backend-engineer" and "defaulted to backend-engineer" for any individual phase in the log. The 83 phases include genuine backend work. Some portion of the assignments are correct; I don't have the ground truth to separate them. The frequency table shows a distribution that seems implausible given the actual diversity of work, but "seems implausible" is an intuition, not a measurement. Building an evaluation that compares decomposer assignments against a manually labeled gold set — even a small one, 50 phases, two hours of work — would convert this from an observation into a finding.
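The gold-set comparison could be as small as the following Go sketch. The `labeled` type and `agreement` function are hypothetical, and the three sample rows are illustrative labels borrowed from the plugin-mission table earlier in this post, not measured data.

```go
package main

import "fmt"

// labeled pairs the decomposer's choice with a human label for one phase.
type labeled struct {
	Phase    string
	Assigned string // persona the decomposer chose
	Gold     string // persona a human labeler chose
}

// agreement returns the fraction of phases where the decomposer matched the
// gold label, plus a count of each "assigned -> gold" disagreement.
func agreement(set []labeled) (rate float64, misroutes map[string]int) {
	misroutes = make(map[string]int)
	if len(set) == 0 {
		return 0, misroutes
	}
	matches := 0
	for _, l := range set {
		if l.Assigned == l.Gold {
			matches++
			continue
		}
		misroutes[l.Assigned+" -> "+l.Gold]++
	}
	return float64(matches) / float64(len(set)), misroutes
}

func main() {
	// Illustrative rows only; a real gold set would be labeled by hand.
	set := []labeled{
		{"Design plugin manifest schema", "backend-engineer", "architect"},
		{"Implement plugin loader", "backend-engineer", "backend-engineer"},
		{"Write skill documentation", "backend-engineer", "technical-writer"},
	}
	rate, misroutes := agreement(set)
	fmt.Printf("agreement: %.2f\n", rate)
	for pair, n := range misroutes {
		fmt.Printf("%s: %d\n", pair, n)
	}
}
```

The misroute counts matter as much as the headline rate: they show which specialists are losing phases to the default persona.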

The alphabetical ordering effect I described for keyword ties is real but small. Most assignments are not pure ties. The LLM match produces a result for most tasks. The problem is that LLM matching and keyword scoring are both biased toward broad coverage, and backend-engineer's WhenToUse has the broadest coverage in the persona set. Narrowing individual WhenToUse sections would reduce some of the bias without changing any selection logic.

The deeper fix is emitting the confidence margin at decomposition time and using it. A phase assigned with high confidence can run as-is. A phase assigned with low confidence (the LLM produced an ambiguous result, or keyword scores were within 2 points of each other) should trigger a second-pass check. The review identified that the phaseResult struct, extended as a completionCh payload, is the right vehicle for carrying phase-level metadata safely from goroutines back to the event loop. The same pattern (return a result struct, let the event loop apply it) is what persona confidence needs. The confidence score that assignPersona computes and discards could be preserved there, logged with decompose.completed, and surfaced for review.
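A minimal Go sketch of that pattern follows. The names phaseResult and completionCh come from the review, but the fields shown here, the `lowConfidenceMargin` constant, and the `collect` and `needsSecondPass` functions are assumptions for illustration; the real struct's shape is not documented in this post.

```go
package main

import (
	"fmt"
	"sort"
	"sync"
)

// phaseResult carries phase-level metadata back to the event loop.
// The fields are assumptions of this sketch.
type phaseResult struct {
	Phase   string
	Persona string
	Margin  int // winner's score minus the runner-up's
}

// lowConfidenceMargin mirrors the "within 2 points" rule of thumb above.
const lowConfidenceMargin = 2

// needsSecondPass reports whether an assignment was close enough to review.
func needsSecondPass(r phaseResult) bool {
	return r.Margin <= lowConfidenceMargin
}

// collect sends each result from its own goroutine over completionCh and
// lets a single loop (standing in for the event loop) apply them. It
// returns the sorted names of phases flagged for a second-pass check.
func collect(results []phaseResult) []string {
	completionCh := make(chan phaseResult)
	var wg sync.WaitGroup
	for _, r := range results {
		wg.Add(1)
		go func(r phaseResult) {
			defer wg.Done()
			completionCh <- r
		}(r)
	}
	// Close the channel once every goroutine has delivered its result.
	go func() {
		wg.Wait()
		close(completionCh)
	}()

	var flagged []string
	for r := range completionCh {
		if needsSecondPass(r) {
			flagged = append(flagged, r.Phase)
		}
	}
	sort.Strings(flagged) // arrival order is nondeterministic
	return flagged
}

func main() {
	flagged := collect([]phaseResult{
		{Phase: "implement-plugin-loader", Persona: "backend-engineer", Margin: 9},
		{Phase: "bootstrap-project-scaffold", Persona: "backend-engineer", Margin: 0},
	})
	fmt.Println("second-pass candidates:", flagged)
}
```

Because only the receiving loop touches `flagged`, no locking is needed; the goroutines hand over a value and never share state.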

Until then, roughly 30% of all phases run in one expert's hands, regardless of what the work actually is.

LEARNING: Persona selection bias in decomposition accumulates from three sources: keyword scope breadth in WhenToUse definitions, LLM defaulting behavior for ambiguous task names, and no confidence margin emitted at assignment time. The result is identifiable from event log frequency alone.

FINDING: 83 of 275 decomposed phases (30.2%) assigned to backend-engineer across Via's mission history. Second-place storyteller: 36 phases. The gap is 2.3x and does not appear to be explained by the actual task distribution, though that has not yet been measured against a labeled set.

GOTCHA: Persona assignment confidence is computed locally in the selection function and discarded. Nothing downstream — phase spec, event log, worker context — can distinguish a strong assignment from a fallback default. This is the same information-flow gap the code reviewer identified for SessionID and MaxTurns.

PATTERN: Comparing decomposer persona frequency distributions against expected task diversity is a cheap signal for selection bias. Any persona running >25% of phases in a general-purpose orchestrator warrants investigation.